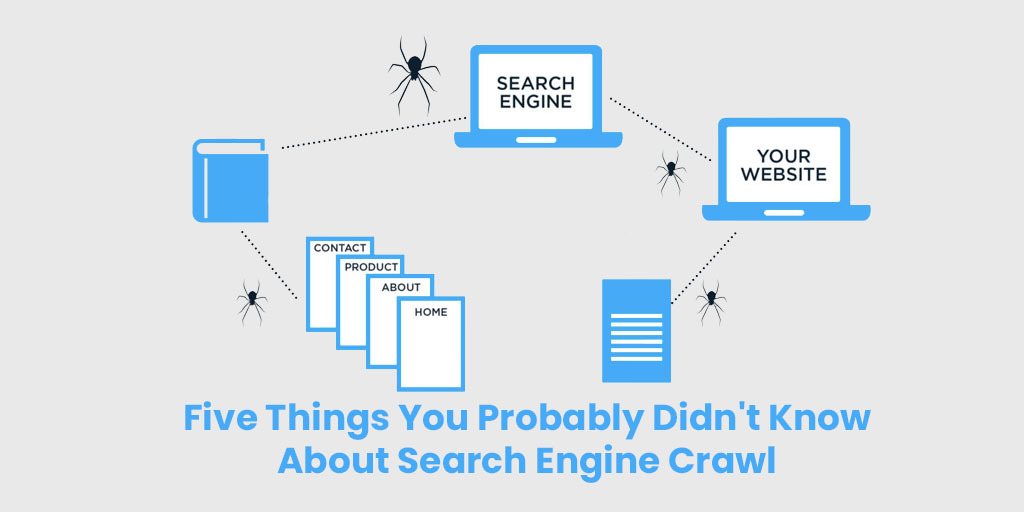

Understanding how search engine crawling works makes it easier to see which of its features benefit your site and its users. Crawling is the process by which search engine web crawlers (also called bots or spiders) visit and download a page, then extract its links in order to discover additional pages.

Pages already known to the search engine are crawled periodically to determine whether their content has changed since the last crawl. If a search engine detects changes to a page after crawling it, it updates its index in response.
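One simple way to picture this change detection is to compare a fingerprint of the page content between crawls. The Python sketch below is only an illustration: the `index` dictionary, the `update_index` helper and the use of a SHA-256 hash are assumptions made for this example, not a description of how any particular search engine works.

```python
import hashlib


def content_fingerprint(html):
    """Return a stable fingerprint for a page's content."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def update_index(index, url, html):
    """Store the page fingerprint and report whether the index was updated.

    `index` is a hypothetical in-memory stand-in for a search index:
    a dict mapping each URL to the fingerprint seen on the last crawl.
    """
    fingerprint = content_fingerprint(html)
    if index.get(url) == fingerprint:
        return False  # content unchanged since the last crawl
    index[url] = fingerprint  # new page or changed content
    return True


index = {}
print(update_index(index, "https://example.com/", "<h1>Hello</h1>"))
print(update_index(index, "https://example.com/", "<h1>Hello</h1>"))
print(update_index(index, "https://example.com/", "<h1>Hello again</h1>"))
```

The first and third calls report an update, while the second one finds the content unchanged.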

How Does Web Crawling Work?

Search engines use their own web crawlers to discover and access web pages. All commercial search engine crawlers begin crawling a website by downloading its robots.txt file, which contains rules about which pages search engines should or should not crawl on the website. The robots.txt file may also contain information about sitemaps, which list the URLs that the site wants a search engine crawler to crawl.
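To make this concrete, here is a small Python sketch that reads a robots.txt file with the standard library's `urllib.robotparser` module. The robots.txt content, the `example.com` URLs and the `ExampleBot` user agent are made up for illustration, and `site_maps()` needs Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt used only for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
# parse() takes the file's lines, so no network request is made here.
parser.parse(ROBOTS_TXT.splitlines())

# Ask whether a hypothetical crawler may fetch each URL.
blog_allowed = parser.can_fetch("ExampleBot", "https://example.com/blog/post")
private_allowed = parser.can_fetch("ExampleBot", "https://example.com/private/report")

# Sitemap URLs declared in the file.
sitemaps = parser.site_maps()

print(blog_allowed, private_allowed, sitemaps)
```

For a live site you would typically call `set_url()` and `read()` instead of `parse()`. Individual search engines may interpret some rules differently from this parser, so treat it as an approximation.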

Search engine crawlers use a number of algorithms and rules to determine how frequently a page should be re-crawled and how many pages on a site should be indexed. For instance, a page that changes regularly may be crawled more often than one that is rarely modified.
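Search engines do not publish their actual scheduling rules, so the following Python sketch is purely hypothetical. It shows one simple way the idea could work: shorten the re-crawl interval when a page has changed and lengthen it when it has not. The `next_interval` function, the halving and doubling factors and the limits are all assumptions chosen for illustration.

```python
def next_interval(current_hours, changed, min_hours=1.0, max_hours=720.0):
    """Return a new re-crawl interval in hours (hypothetical heuristic).

    A page that changed is revisited sooner (interval halved);
    a page that did not change is revisited later (interval doubled).
    The result is kept between min_hours and max_hours.
    """
    factor = 0.5 if changed else 2.0
    return max(min_hours, min(max_hours, current_hours * factor))


# A frequently updated page is crawled more and more often...
print(next_interval(24.0, changed=True))
# ...while a rarely modified page is crawled less often.
print(next_interval(24.0, changed=False))
```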

Everything You Need to Know About Crawling – GegoSoft SEO Services

Crawling is the process by which search engines discover new and updated content on the web, such as new sites or pages, changes to existing sites, and dead links.

To do this, a search engine uses a program generally referred to as a ‘crawler’, ‘bot’ or ‘spider’ (each search engine has its own), which follows an algorithmic process to determine which sites to crawl and how often.

As a search engine’s crawler moves through your site, it also detects and records any links it finds on these pages and adds them to a list to be crawled later. This is how new content is discovered.

How Can Search Engine Crawlers Be Identified?

Search engine bots crawling a website can be identified from the user agent string that they pass to the web server when requesting web pages.

Here are a few examples of user agent strings used by search engines:

- Googlebot User Agent: Mozilla/5.0 (compatible; Googlebot/2.1; +https://www.google.com/bot.html)

- Bingbot User Agent: Mozilla/5.0 (compatible; bingbot/2.0; +https://www.bing.com/bingbot.htm)

- Baidu User Agent: Mozilla/5.0 (compatible; Baiduspider/2.0; +https://www.baidu.com/search/spider.html)

- Yandex User Agent: Mozilla/5.0 (compatible; YandexBot/3.0; +https://yandex.com/bots)
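As a rough illustration, the Python sketch below matches a user agent string against the crawler tokens that appear in the examples above. The `CRAWLER_TOKENS` table and the `identify_crawler` function are hypothetical names for this example, and the result only reflects what the request claims to be.

```python
# Lowercase tokens taken from the example user agent strings in this post.
CRAWLER_TOKENS = {
    "googlebot": "Googlebot",
    "bingbot": "Bingbot",
    "baiduspider": "Baidu",
    "yandexbot": "Yandex",
}


def identify_crawler(user_agent):
    """Return the crawler name claimed by a user agent string, or None."""
    ua = user_agent.lower()
    for token, name in CRAWLER_TOKENS.items():
        if token in ua:
            return name
    return None


claimed = identify_crawler(
    "Mozilla/5.0 (compatible; bingbot/2.0; +https://www.bing.com/bingbot.htm)"
)
print(claimed)
```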

However, anyone can send the same user agent strings as those used by search engines, so the user agent alone is not proof. The IP address that made the request can be used to confirm that it came from the search engine, a process called a reverse DNS lookup.
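A reverse DNS check is usually paired with a forward lookup: resolve the IP address to a hostname, check that the hostname belongs to the search engine, then resolve the hostname back and confirm it returns the same IP address. The Python sketch below shows that idea with the standard `socket` module. The `CRAWLER_DOMAINS` table and the function names are assumptions for this example, so check each search engine's own documentation for the hostnames it currently uses.

```python
import socket

# Hostname suffixes per crawler. These are assumptions for illustration;
# confirm the current values in each search engine's documentation.
CRAWLER_DOMAINS = {
    "googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}


def reverse_lookup(ip):
    """Reverse DNS: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(hostname):
    """Forward DNS: hostname to a list of IPv4 addresses."""
    return socket.gethostbyname_ex(hostname)[2]


def is_verified_crawler(ip, crawler, reverse=reverse_lookup, forward=forward_lookup):
    """Return True if `ip` passes the reverse and forward DNS checks.

    The lookup functions can be swapped out, which makes the check
    easy to try without touching the network.
    """
    suffixes = CRAWLER_DOMAINS.get(crawler.lower())
    if not suffixes:
        return False
    try:
        hostname = reverse(ip)
        if not hostname.lower().endswith(suffixes):
            return False  # hostname is not in the search engine's domain
        return ip in forward(hostname)  # hostname must map back to the IP
    except OSError:
        return False  # DNS lookup failed, so the request is unverified
```

In practice you would call `is_verified_crawler(request_ip, "googlebot")` with the IP address taken from your server logs.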

Crawling Images and Other Non-Text Files

Search engines will normally attempt to crawl and index every URL that they encounter. However, if the URL is a non-text file type such as an image, video, or audio file, search engines will typically not be able to read the content of the file other than the associated filename and metadata.

Although a search engine may only be able to extract a limited amount of information about non-text file types, they can still be indexed, rank in search results, and receive traffic.

Process of Crawling and Extracting Links from Pages

Crawlers discover new pages by re-crawling existing pages they already know about, then extracting the links to other pages to find new URLs. These new URLs are added to the crawl queue so that they can be downloaded at a later date.

By following links in this way, search engines are able to discover every publicly available webpage on the internet that is linked from at least one other page.
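The process described above can be sketched in a few lines of Python using only the standard library. To keep the example self-contained, the "web" here is a small made-up dictionary of pages (`PAGES`) rather than real HTTP requests, and the `LinkExtractor`, `extract_links` and `crawl` names are hypothetical.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collect absolute URLs from the <a href="..."> tags of one page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links and drop any #fragment.
                absolute, _fragment = urldefrag(urljoin(self.base_url, value))
                self.links.append(absolute)


def extract_links(base_url, html):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


def crawl(start_url, fetch):
    """Breadth-first crawl; `fetch` returns a page's HTML or None."""
    queue = deque([start_url])  # the crawl queue
    seen = {start_url}          # URLs already queued
    crawled = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # page could not be downloaded
        crawled.append(url)
        for link in extract_links(url, html):
            if link not in seen:
                seen.add(link)
                queue.append(link)  # download it at a later point
    return crawled


# A made-up site. The orphan page exists but nothing links to it.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b#top">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/c">C</a>',
    "https://example.com/b": '<a href="/">Home</a>',
    "https://example.com/c": "",
    "https://example.com/orphan": "",
}

discovered = crawl("https://example.com/", PAGES.get)
print(discovered)
```

The orphan page is never discovered because no other page links to it, which is exactly the situation where a sitemap or a manual submission helps.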

How Sitemaps Work

Another way that search engines can discover new pages is by crawling sitemaps. Sitemaps contain sets of URLs and can be created by a website to provide search engines with a list of pages to be crawled. They can help search engines find content hidden deep within a website and give webmasters the ability to better control and understand site indexing and crawl frequency.
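For readers who have not seen one, an XML sitemap is a list of `<url>` entries, each with a `<loc>` and optionally a `<lastmod>` date. The Python sketch below reads such a file with the standard `xml.etree.ElementTree` module. The sitemap content and the `parse_sitemap` function are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# A made-up sitemap used only for this example.
SITEMAP_XML = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2024-01-15</lastmod>
  </url>
  <url>
    <loc>https://example.com/deep/hidden/page</loc>
  </url>
</urlset>
"""


def parse_sitemap(xml_text):
    """Return a list of (url, lastmod) pairs; lastmod may be None."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default=None, namespaces=SITEMAP_NS)
        if loc:
            entries.append((loc, lastmod))
    return entries


entries = parse_sitemap(SITEMAP_XML)
print(entries)
```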

Page Submissions

Alternatively, individual pages can often be submitted directly to search engines via their respective interfaces. This manual method of page discovery can be used when new content is published on a site, or when changes have taken place and you would like to minimise the time it takes for search engines to see the changed content.

Google states that for large URL volumes you should use XML sitemaps, but the manual submission method is sometimes more convenient when submitting a handful of pages. It is also important to note that Google limits webmasters to 10 URL submissions per day. In addition, Google states that the response time for indexing is the same for sitemaps as for individual submissions.

If you have crawling, indexing or ranking issues that you need help with, get in touch with GegoSoft SEO Services.


We hope you enjoyed reading this blog post. If you have any queries, call our expert team or schedule a meeting to talk with our experts.